Original post is here: eklausmeier.goip.de

One of the most frequent visitors of this website, by far, is the ahrefs.com robot. The Ahrefs company, located in Singapore, uses 3,455 servers and stores more than 300 petabytes.

Ahrefs scans this website even more frequently than Google! The table below shows the monthly visits to this website. From April 2022 onward, the Ahrefs visits in every month exceed those of Google and Semrush combined.

| Date | Ahrefs | Semrush | Google |
|---|---|---|---|
| 2021.03 | 109 | 51 | 11 |
| 2021.04 | 334 | 121 | 11 |
| 2021.05 | 205 | 1050 | 279 |
| 2021.06 | 58 | 1265 | 814 |
| 2021.07 | 198 | 1161 | 1167 |
| 2021.08 | 788 | 1419 | 1065 |
| 2022.04 | 4128 | 649 | 1577 |
| 2022.05 | 7380 | 1783 | 4368 |
| 2022.06 | 5921 | 942 | 2902 |
| 2022.07 | 7642 | 1373 | 3721 |
| 2022.08 | 10853 | 3421 | 3773 |
| 2022.09 | 8814 | 3122 | 3673 |
| 2022.10 | 11148 | 1562 | 6903 |
| 2022.11 | 11153 | 1622 | 8176 |
| 2022.12 | 11646 | 2417 | 4178 |
| 2023.01 | 13171 | 2086 | 1963 |
| 2023.02 | 14753 | 4057 | 613 |
| 2023.03 | 14130 | 5143 | 1324 |
| 2023.04 | 11506 | 5652 | 1535 |

The table above can also be presented as a graph.
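The comparison between Ahrefs and the other two bots can be checked with a few lines of Perl. This is only a minimal sketch: the three rows are copied from the table above, while the function name `ahrefs_dominant_months` and the variable names are made up for illustration.

```perl
#!/usr/bin/perl
use strict;
use warnings;

# Three sample rows copied from the table: month, Ahrefs, and the two other bots
my @rows = (
    [ '2021.05',   205, 1050,  279 ],
    [ '2022.04',  4128,  649, 1577 ],
    [ '2023.04', 11506, 5652, 1535 ],
);

# Return the months in which Ahrefs alone exceeds the other two bots combined
# (hypothetical helper, not part of the original script)
sub ahrefs_dominant_months {
    my @result;
    for my $r (@_) {
        my ($month, $ahrefs, $other1, $other2) = @$r;
        push @result, $month if $ahrefs > $other1 + $other2;
    }
    return @result;
}

print join(", ", ahrefs_dominant_months(@rows)), "\n";   # prints: 2022.04, 2023.04
```

Feeding all rows of the table into `@rows` gives the same picture: the early 2021 months are mixed, but every month from 2022.04 onward qualifies.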

Ahrefs offers different plans. I only registered for the so-called "free" plan. All diagrams below were extracted from the free plan. The plan prices below are given in EUR per month.

| Plan | Free | Lite | Standard | Advanced | Enterprise |
|---|---|---|---|---|---|
| Cost/EUR | Free | 89 | 179 | 369 | 899 |

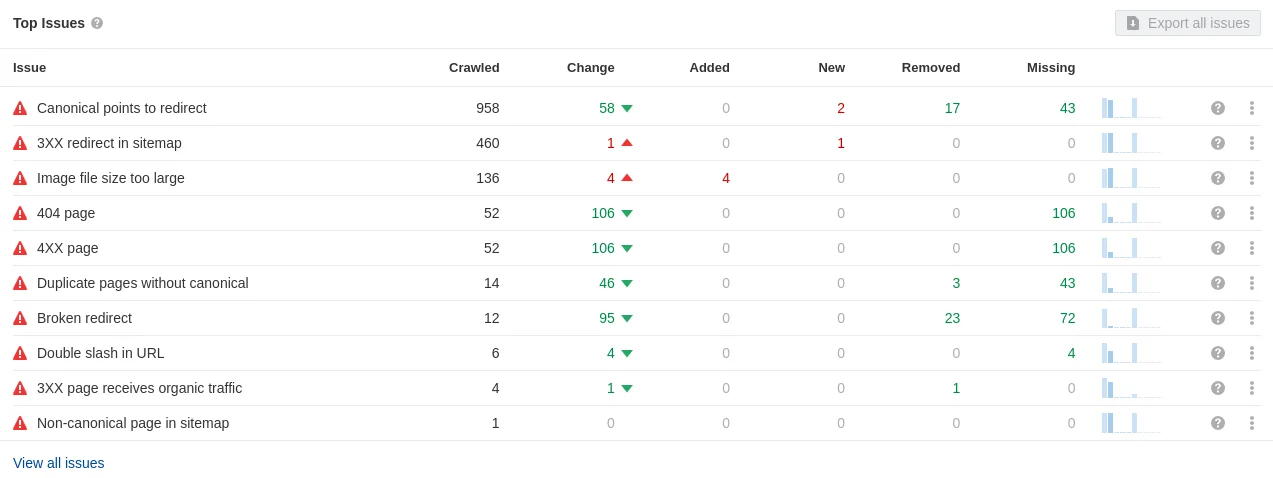

The table below shows a handy overview of your issues. A very common issue is 404 pages.

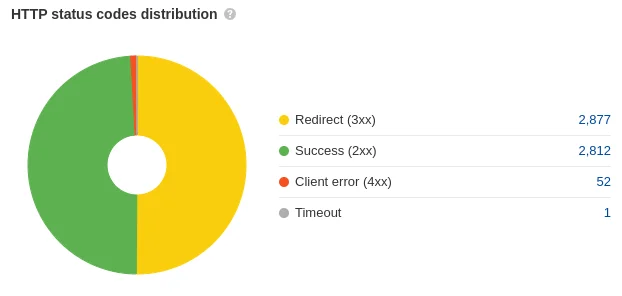

A nice diagram of the distribution of your HTTP status codes.

A nice introduction to resolving issues on your website: A Simple Workflow in Ahrefs' Site Audit.

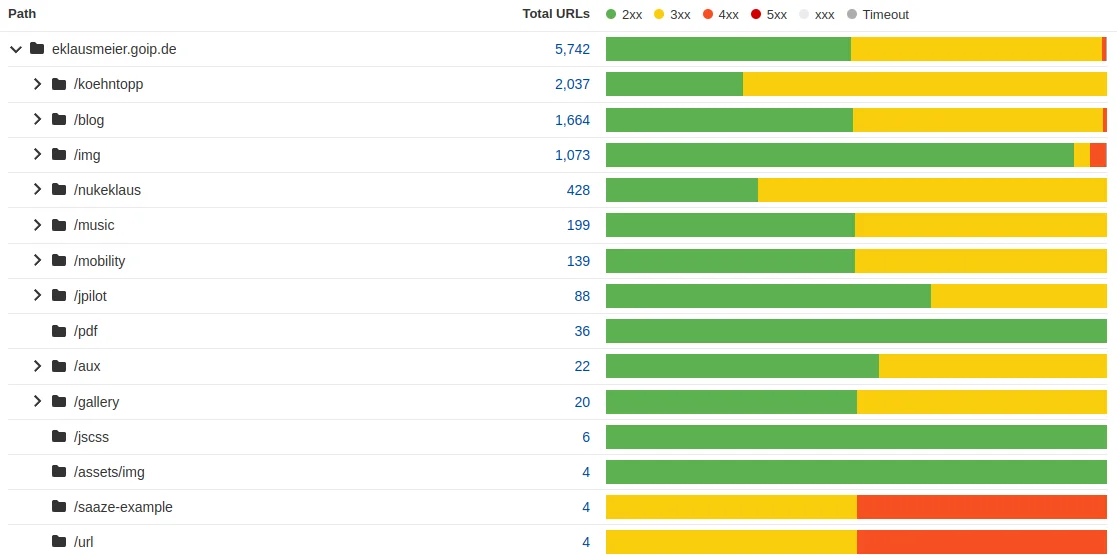

Distribution of HTTP status codes for each "directory" of your website.

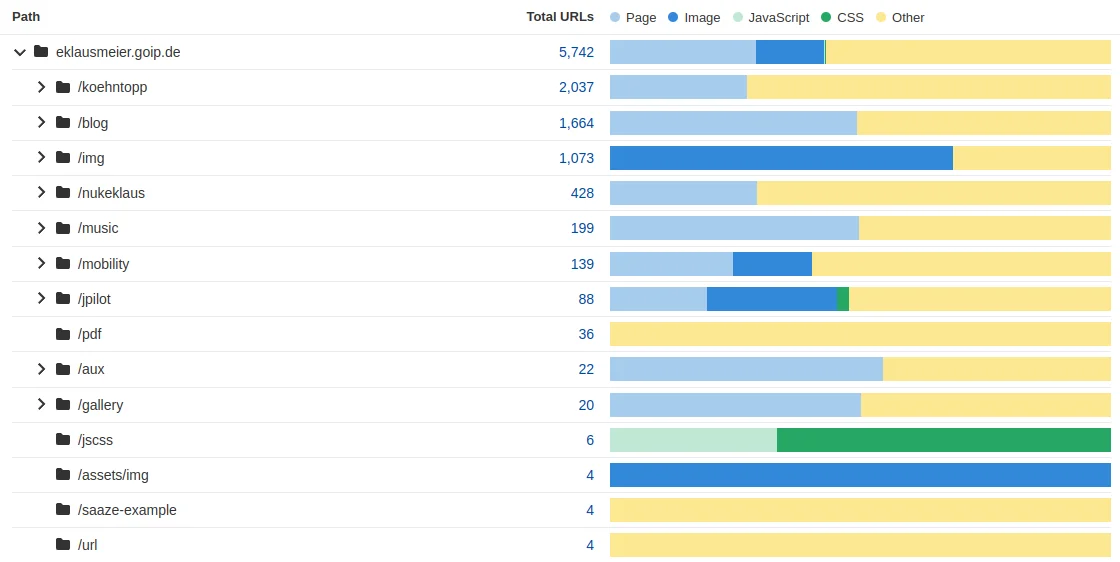

Same as above, but this time the distribution of content types across the directories of your website.

In the above report you can expand the blog part to get detailed information on this path.

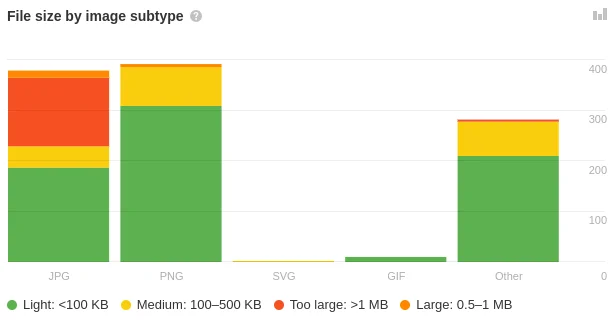

Distribution of image file sizes. Again, you can drill down from here.

A report on issues with the images on your website.

Addendum: This site is served by the Hiawatha web server. Extracting specific robots from the Hiawatha access.log was done with the Perl program below.

    #!/bin/perl -W
    # Count Ahrefs, Google, and Semrush bots per month per year in Hiawatha access.log files
    # Elmar Klausmeier, 22-Apr-2023

    use strict;

    my %H = ( ahrefsbot => 0, googlebot => 0, semrushbot => 0 );
    my ($year,$month) = (-1,"Illegal");
    my %monthNames = (
        Jan => 1, Feb => 2, Mar => 3, Apr => 4, May => 5, Jun => 6,
        Jul => 7, Aug => 8, Sep => 9, Oct => 10, Nov => 11, Dec => 12
    );

    # Print "YYYY.MM:" followed by the counts in sorted key order, then reset the counts
    sub prtH(@) {
        printf("%4d.%02d:",$year,$monthNames{$month});
        for my $i (sort keys %H) {
            printf("\t%d",$H{$i});
            $H{$i} = 0;	# clear sums
        }
        print "\n";
    }

    while (<>) {
        my @F = split /\|/;
        next if ($#F < 6);	# need UA in $F[6]
        # Hiawatha date field is like: Sun 16 Apr 2023 11:13:13 +0200
        my ($weekday,$day,$mon,$yr,$hms,$ds) = split(/ /,$F[1]);
        #printf("day=%s, mon=%s, yr=%s\n",$day,$mon,$yr);
        if ($year == -1) { ($year,$month) = ($yr,$mon); }
        elsif ($month ne $mon) { prtH(); ($year,$month) = ($yr,$mon); }
        # Split the lower-cased user agent into tokens, count the first known bot
        for ( split(/[ :;,\/\(\)\@\$]/,lc $F[6]) ) {
            if (defined($H{$_})) { $H{$_} += 1; last; }
        }
    }
    prtH() if ($year != -1);	# flush the last month, unless there was no input
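To see how the user-agent matching in the program works, here is a minimal, self-contained sketch of just that step. The helper name `bot_of` and the hash `%bots` are made up for illustration, and the two user-agent strings are only sample inputs, not lines taken from the actual log.

```perl
#!/usr/bin/perl
use strict;
use warnings;

# Known bot tokens, same keys as in %H of the program above
my %bots = map { $_ => 1 } qw(ahrefsbot googlebot semrushbot);

# Return the first known bot token found in a user-agent string, or undef
# (hypothetical helper, mirrors the inner for-loop of the program above)
sub bot_of {
    my ($ua) = @_;
    for my $token ( split /[ :;,\/\(\)\@\$]/, lc $ua ) {
        return $token if $bots{$token};
    }
    return undef;
}

# Sample user-agent strings (assumed for this example)
my $crawler = 'Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)';
my $browser = 'Mozilla/5.0 (X11; Linux x86_64) Firefox/112.0';

print bot_of($crawler) // 'none', "\n";   # prints: ahrefsbot
print bot_of($browser) // 'none', "\n";   # prints: none
```

Because the string is lower-cased and split on punctuation before the lookup, `AhrefsBot/7.0` yields the token `ahrefsbot`, and the version number ends up in a separate token that is simply ignored.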

Finally, concatenating all access.log files and piping them through the above script:

    blogconcatlog 77 | blogahrefs

Added 15-May-2023: One disadvantage of Ahrefs is that accessing their site can be difficult at times. Their check for humans loops forever.